In my last blog post, I posed the question of how many hidden layers should be in a neural network, and how many hidden neurons should be in each hidden layer. This is related to the Neural Network Design, or Neural Network Architecture.

Well, I found the answer, I think, in the book entitled An Introduction to Neural Networks for Java authored by Jeff Heaton. I noticed, incidentally, that Jeff was doing AI and writing about it as early as 2008 - fifteen years ago prior to the current AI firestorm we see today - and possibly before that, using languages like Java, C# (C Sharp), and Encog (which I am unfamiliar with).

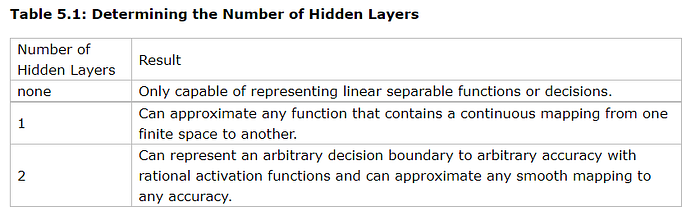

In this book, in Table 5.1 (Chapter 5), Jeff states (quoted):

"Problems that require two hidden layers are rarely encountered. However, neural networks with two hidden layers can represent functions with any kind of shape. There is currently no theoretical reason to use neural networks with any more than two hidden layers. In fact, for many practical problems, there is no reason to use any more than one hidden layer. Table 5.1 summarizes the capabilities of neural network architectures with various hidden layers."

Jeff then has the following table...

"There are many rule-of-thumb methods for determining the correct number of neurons to use in the hidden layers, such as the following:

- The number of hidden neurons should be between the size of the input layer and the size of the output layer.

- The number of hidden neurons should be 2/3 the size of the input layer, plus the size of the output layer.

- The number of hidden neurons should be less than twice the size of the input layer."

Simple - and useful! Now, this is obviously a general rule of thumb, a starting point.

There is a Goldilocks method for choosing the right sizes of a Neural Network. If the number of neurons is too small, you get higher bias and underfitting. If you choose too many, you get the opposite problem of overfitting - not to mention the issue of wasting precious and expensive computational cycles on floating point processors (GPUs).

In fact, the process of calibrating a Neural Network leads to a concept of Pruning, where you examine which Neurons affect the total output, and prune out those that don't have the measure of contribution that makes a significant difference to the end result.